UFABET online betting site: slots, football, lottery, boxing, and casino in one place

In 2026, the betting site UFABET continues to hold its position as the number 1 betting site in Thailand, owing to steady development of the platform in technology, security, and user experience. Its main strength is a system that supports many kinds of betting, such as online football betting and online baccarat, covering almost every popular bet type. It also offers good odds with continuously updated live prices, which helps bettors plan precisely. The UFABET system is also accepted internationally, so many players trust it and keep using the service.

UFABET is ranked the number 1 betting site in Thailand for 2026

UFABET firmly holds its position as the number 1 online betting site in Thailand in 2026, with a higher standard of service in security, system stability, and a variety of games that suits players at every level. Live casino and other popular betting games are selected for quality, and the site runs smoothly on both mobile and desktop, so users can access entertainment anywhere, anytime. In 2026 UFABET also offers free-credit promotions throughout the year and cashback on losses of up to 5%.

Sign up with UFABET, a direct site with no agents, free of charge

Signing up with UFABET directly, not through an agent, is an option that greatly increases player confidence, particularly regarding modern and transparent service. Those who want to sign up with UFA for free can do so easily, and within a few minutes they can access the betting system. The advantage of choosing the UFA direct site is receiving service straight from the provider, without worrying about deductions from the balance or delayed withdrawals.

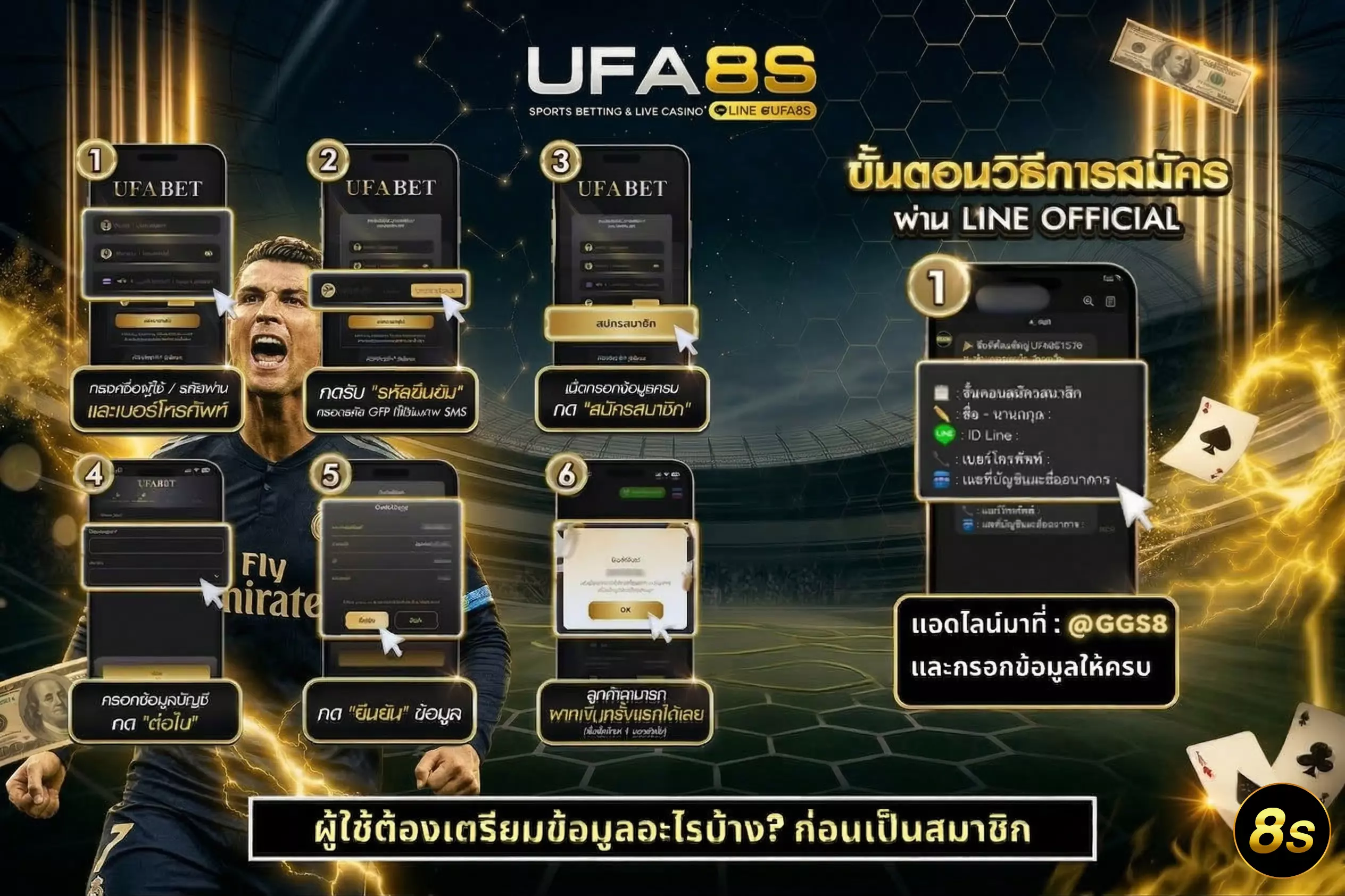

Signing up for the UFABET online betting site has become more convenient. Users can register directly through the main website. The free UFABET sign-up process is not complicated: fill in basic information in a few steps and you can start using the betting system right away. Another popular channel is signing up through UFABET's LINE Official account, LINE: @GGS8, which is more convenient for those who want close guidance. Applicants can ask about each step and get answers right away.

UFABET deposits and withdrawals: automatic system, no minimum, supports all banks and TrueMoney Wallet

The automatic deposit and withdrawal system on the UFABET parent site was developed for today's players, who want maximum speed and convenience. Users can make UFABET deposits and withdrawals themselves through the automated system. Within a few seconds the money arrives in the game account or bank account, with no long wait. A key feature is that transactions have no minimum amount, so players can manage their budget flexibly, and both beginners and experienced players can use it comfortably. The UFABET deposit and withdrawal system also supports transactions through all leading banks as well as TrueMoney Wallet, which is currently very popular and makes things easier for those who want to transact on mobile without using a bank account directly.

What services does the UFABET website offer?

UFABET is a sports betting and online casino website that brings together popular services for interested users. The site is most often accessed through UFABET.com, which is convenient on mobile and on every network, with clear menus that help players find what they need. It aims to offer a fun and distinctive experience. Whether you are a sports fan who wants to bet on football, basketball, or other popular sports, or you enjoy casino games in their many forms, the UFABET direct site is ready to provide everything you want to bet on.

Football betting

Football betting is the most popular service on UFABET. UFABET.com gathers football competitions from around the world, both major and minor leagues, so that every match can be bet on, from top leagues to the World Cup and the Euros, as well as continental competitions and major tournaments such as the Champions League and Copa América.

Online casino

UFABET offers a live casino experience that feels like sitting in a real casino, delivered through a sharp and highly stable live stream. You can play popular casino games such as baccarat, roulette, Sic Bo, Dragon Tiger, and others in real time, with professional dealers serving throughout the game, and you can choose the game room that suits your stakes.

Lottery

The online lottery on UFABET is a highly popular service, with many lottery types to choose from, including the Thai government lottery, the Yi Ki lottery, the Lao lottery, the Hanoi lottery, and stock lotteries from various countries. Results are drawn quickly and players can join throughout the day. The Yi Ki lottery in particular can be played up to 88 rounds per day.

Slots

Slots are among the most entertaining and easiest games to play. UFABET offers more than 1,000 online slot games from leading game providers worldwide, including games with high payouts and special features such as free spins, multipliers, and bonus games that increase the chance of winning.

Baccarat

Baccarat is a highly popular card game in online casinos, and UFABET offers it in full. Players can bet on the Player side, the Banker side, or a Tie, following basic rules that are easy to understand and fun to play. At UFABET, players get a baccarat experience similar to playing in a real casino. Several baccarat variants are also available, so players can choose according to their style and preference.

10 UFABET sites in the UFA company: the most reliable and trustworthy

When it comes to online betting sites today, UFA is the first name that bettors of every kind know well and mention regularly. The UFABET site has grown continuously and has branched out into many sub-sites, each of which is a direct site that does not go through agents and is described as 100% trustworthy. Whichever site you play on, you can expect it to be secure and to let you play every online betting game you want. The trustworthy sites in the UFA company that we give as examples are as follows:

- UFA168

- UFAKICK

- UFAPLUS

- UFA289

- UFA365

- UFANANCE

- UFADEAL

- UFA350

- UFAC4

- UFASNAKE

The sites listed above are only the 10 that we want to recommend. Many other sites in the UFA network are also recognized as among the best in today's online betting market.

How the UFABET main site serves players better than agent sites

The term "main site", which many bettors know as "direct site", is something online bettors should not overlook. It means that you use the service of the parent site directly, with no intermediary. On an agent site, transactions go through a third party, which carries more risk. Using a direct site keeps every part of a member's activity secure, from sign-up to deposits, withdrawals, and game play. Members can see that they are using a betting site that deals honestly with them: no cheating on payouts, no shutting down the site and disappearing, and every baht of credit put to good use. Most importantly, once you have made a profit you can withdraw immediately, and withdraw the full amount you want.

UFABET promotions this year: complete, good value, with plenty of free credit

The UFABET website has raised the value it offers players by releasing a variety of promotions suited to every style of betting, so that players can start with confidence. This is for anyone looking for the best UFABET site or interested in its promotions. One of the most talked-about promotions is UFABET free credit, which usually comes as a welcome bonus for new members. Sign up and meet a few conditions, and you can receive free credit to try playing right away without depositing first, which is a good opportunity to try out the UFABET direct system. The loss cashback promotion is another one that makes UFABET more popular than other sites. Players receive money back even on losing days, which reduces risk and makes it easier to keep playing steadily.

Bet on the UFABET online casino on mobile, with support for both iOS and Android

The UFABET online casino on mobile is designed to work fully on both iOS and Android. You can access it through a web browser immediately, without installing an additional app. It supports every screen size, so it runs smoothly and responds quickly. The system is tuned for each platform, which reduces device compatibility problems, and it continues to support a wide range of OS versions. Whether you connect over Wi-Fi or mobile data, the connection is stable. The website structure has also been developed to load quickly, be easy to use, and not freeze during play, so you can bet on mobile smoothly in any situation.

A summary of UFABET's history, from its beginnings to the most popular betting site in Thailand

UFABET started as an online betting provider focused on modern technology and world-class standards. We developed the brand to serve users worldwide through a system that is highly stable, easy to use, and secure. The turning point that made UFABET grow quickly was applying technology to the betting system, which let users reach its services conveniently and quickly. Over the years, the UFABET betting site has expanded its services continuously to cover sports, casino, slots, and many other types of betting games, while developing its partner network and affiliated websites to the same standard, until it became one of the most popular brands in Thailand. The credibility of the UFABET site comes not only from its reputation but also from its service quality, transparency, and continuous system development. As a result, it has kept its position as a popular betting site and a top choice for players who want an all-in-one betting experience in 2026.

UFABET football betting FAQ: frequently asked questions, updated for 2026

How do I sign up for UFABET?

Signing up for the UFABET direct site is easy: fill in basic information, verify your identity, and set a password. Approval is fast, and sign-up works on both mobile and desktop.

How do I deposit and withdraw money on UFABET?

The UFABET deposit and withdrawal system is automatic, has no minimum, supports all Thai banks and TrueMoney Wallet, and accepts transactions 24 hours a day.

How can I check my betting slips?

Members can open the "Betting history" menu to review past slips and check bet status and balance immediately.

Can I edit my account information?

Members can change their password, update personal information, and configure notifications securely through the profile page, which reduces the risk of the account being accessed by outsiders.

What promotions and bonuses does UFABET offer?

UFABET has a variety of promotions, such as a welcome bonus for new members, loss cashback, and special monthly events. This information was last updated for 2026.

Is the UFABET system secure?

The UFABET direct site follows international standards, encrypts data, and checks the security of member accounts at every step. Members can review their betting history at any time.